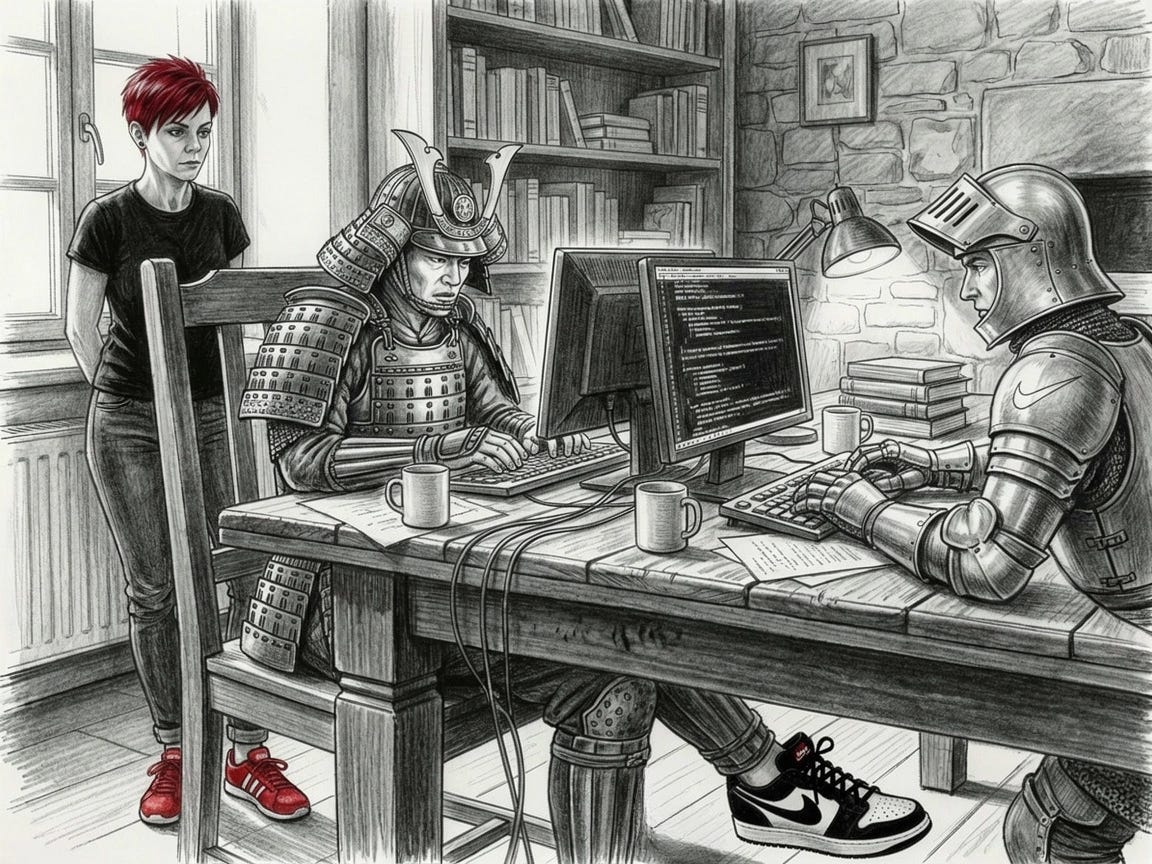

The code works fine, the humans keep breaking things

Mental models for code reviews, collaboration, and your blind spots

Your PR has been open for three days. You got twelve comments. All about variable naming. Three more about single quotes versus double quotes. And the one that makes your left eye twitch: requesting a blank line between functions.

Zero comments about whether the caching strategy will corrupt data when the cache and database get out of sync.

Zero about the race condition you’re pretty sure exists but couldn’t reproduce.

You fix the variable names, swallow your annoyance, add the blank lines. Merge it.

Three weeks later, the caching strategy causes data corruption in production. Everyone reviewed it and nobody actually reviewed it.

The code was the easy part. The humans were the problem. (By the way, this is a case of bike-shedding. More on that later.)

The previous essay covered technical decisions: how to diagnose problems, build solutions, and think through consequences, along with the models that help you build the right thing the right way.

This one is about the other half: working with humans who have different context, different assumptions, different blind spots. And recognizing when you’re the one missing something.

These models help make collaboration less painful — for you and for them.

I’ll start with code reviews and feedback, since you encounter them daily and this is your most frequent touch point with other human beings you share the code with.

Code reviews and feedback

Give feedback that actually helps

I’ve seen code reviews range from thorough testing and detailed context to thumbs-up emoji and “LGTM.” My favorite: 3,000 lines of breaking changes with “Sorry, a lot of code. Good luck reviewing this.”

Curse of knowledge

You spent three days implementing the feature. You understand the data flow, the edge cases, the performance tradeoffs. You know why you chose this approach over the alternatives. You know which parts are temporary hacks and which are intentional design decisions.

The code reviewer sees none of this. They see 500 lines of changes across 12 files with a PR description that says “Add user filtering.”

You’re frustrated because they don’t seem to understand the obvious elegance of your solution. They’re frustrated the code makes no sense. Neither of you is wrong. You’re both suffering from the curse of knowledge.

The curse is that once you know something, you can’t unknow it. You can’t remember what it’s like to not understand. What’s obvious to you after three days of implementation is incomprehensible to someone seeing it for the first time.

This shows up everywhere in code reviews. You write a function that’s perfectly clear to you because you know the domain model. The reviewer asks basic questions that feel like they’re not paying attention. They’re not being difficult. They genuinely don’t have the context you spent days acquiring.

Or you’re the reviewer and can’t figure out why they did something the complicated way. You suggest the obvious simplification. Doh.

But after they explain the edge case you didn’t know about, the requirement that isn’t documented, and the reason the simple approach doesn’t work, you get your a-ha moment. You didn’t see it because you didn’t have their knowledge.

The curse works both ways.

You can’t see what’s unclear because it’s all clear to you.

They can’t see what’s obvious because they don’t have your context.

The other person isn’t lazy, incompetent, or difficult. They’re just missing context.

A helpful PR description answers the questions the reviewer doesn’t know to ask. What broke? Why this fix instead of the obvious one? What did you try that didn’t work? What’s going to look weird but is intentional?

“Add user filtering” gives them nothing. “Add user filtering because support is drowning in requests for this, tried X but it broke Y, so using Z approach” gives them the context you spent three days acquiring.

Questions reveal gaps better than suggestions. “Can you help me understand why we’re doing X?” opens a conversation. “This should be Y” starts an argument (that can become a war with keyboard warriors).

You might be right that it should be Y. Or you’re missing the context that makes X necessary.

The curse of knowledge is invisible to you. You don’t know what you know that others don’t know.

Chesterton’s fence

I talked about Chesterton’s fence before, in the context of legacy processes and organizational decisions. The same principle applies to code.

Before you remove something, understand why it was put there.

If you see a fence across a road and don’t know why it exists, don’t tear it down. Maybe it’s pointless. Maybe it’s preventing cattle from wandering. You don’t know until you ask.

You’re reviewing a PR. There’s a weird check: if (data && data.items && data.items.length > 0) when a simple if (data.items.length > 0) would seem to do. Looks like leftover defensive clutter. You suggest the cleanup.

They explain that production data sometimes has items: null instead of an empty array. The old API returned null, the new API returns empty arrays, but during migration both formats exist. The verbose check handles both. Your improvement would crash on legacy data.

Yep, the fence was there for a reason.
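Here is a minimal sketch of that fence, using hypothetical payloads (the object shapes and function names are illustrative assumptions, not code from a real system). It shows that the verbose check tolerates items: null, that an unguarded property access throws on it, and that an optional-chaining rewrite is only safe when it covers every link that can be null.

```javascript
// Hypothetical payloads mirroring the migration described above.
const newApiResponse = { items: [] };              // new API: empty array
const legacyResponse = { items: null };            // old API: null instead of []
const populatedResponse = { items: ["a", "b"] };

// The "fence": every link in the chain is checked before it is used.
function hasItemsVerbose(data) {
  return Boolean(data && data.items && data.items.length > 0);
}

// The tempting cleanup: assumes items is always an array.
function hasItemsUnguarded(data) {
  return data.items.length > 0;
}

// A modern equivalent of the fence: optional chaining on every nullable link.
function hasItemsOptional(data) {
  return (data?.items?.length ?? 0) > 0;
}

// Helper: reports whether calling fn throws a TypeError.
function throwsTypeError(fn) {
  try {
    fn();
    return false;
  } catch (err) {
    return err instanceof TypeError;
  }
}

console.log(hasItemsVerbose(legacyResponse));                          // false
console.log(throwsTypeError(() => hasItemsUnguarded(legacyResponse))); // true
console.log(hasItemsOptional(legacyResponse));                         // false
console.log(hasItemsOptional(populatedResponse));                      // true
```

A test fixture built from real legacy data would have caught this before the review comment did.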

Or you’re refactoring old code and find a function that seems to do nothing. Just passes data through with what looks like unnecessary transformation. You delete it. Tests pass. You ship it. Three days later, reports are broken. The unnecessary transformation was handling a specific date format from an external API that only shows up in monthly reports. The daily reports use a different format. Your tests only covered daily data.

In code, the fence is any logic that seems wrong, unnecessary, or overly complicated. The check that looks redundant, the comment that seems obvious, the abstraction that feels like overkill, or the duplicate code that should be DRYed up.

Someone put it there. Maybe they were wrong, but maybe they were solving a problem you haven’t encountered yet. Maybe there’s a bug that only triggers in production. Maybe there’s a requirement that isn’t documented. Maybe there’s an edge case in data you haven’t seen.

I’ve seen engineers remove dead code that was handling a quarterly batch job. Delete unnecessary validation that was preventing a security issue. Simplify overcomplicated logic that was working around a database limitation. The code looked wrong because they didn’t have the context.

The shortest path to an answer: run git blame, find the PR that added the code, talk to the person who wrote it (if they’re still around), and search for related bug reports or incidents.

If you can’t find a reason and it seems genuinely pointless, the safer move is proposing removal while acknowledging uncertainty.

The code might be bad. Or you might not understand the problem it’s solving. Worth finding out which before tearing down the fence.

Hanlon’s Razor

Engineer submits a PR with a for loop instead of the functional map/filter chain you’d use. Your first thought: they don’t understand functional programming. Your comment: “This should use map/filter.”

When they respond, you learn that the dataset can be 100k items and the functional approach creates intermediate arrays that blow up memory. They profiled it. The for loop is 10x faster and uses a fraction of the memory.

You assumed incompetence, but the actual reason was performance optimization based on production data. Apology accepted.
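To make the tradeoff concrete, here is a rough sketch with a made-up dataset (the record shape and function names are assumptions for illustration). Both versions compute the same total. The chained one allocates a filtered array and a mapped array along the way, while the loop makes a single pass. How much that matters depends on the engine and the data, so treat the 10x figure as something to confirm with a profiler, not a rule.

```javascript
// Hypothetical dataset: 100k order-like records.
const orders = Array.from({ length: 100_000 }, (_, i) => ({
  id: i,
  active: i % 2 === 0,
  amount: i % 100,
}));

// Chained version: filter() and map() each build a full intermediate array
// before reduce() collapses the result into a single number.
function totalChained(items) {
  return items
    .filter((order) => order.active)
    .map((order) => order.amount)
    .reduce((sum, amount) => sum + amount, 0);
}

// Loop version: one pass over the data, no intermediate arrays.
function totalLoop(items) {
  let sum = 0;
  for (const order of items) {
    if (order.active) sum += order.amount;
  }
  return sum;
}

console.log(totalChained(orders) === totalLoop(orders)); // true
```

The loop is not more correct, only cheaper in allocations. That is exactly the kind of context a one-line review comment can’t see.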

There’s a better approach here, one that keeps code reviews collaborative instead of adversarial. Use Hanlon’s Razor (sound familiar?) and interpret someone’s actions in the most reasonable way possible before assuming the worst.

If their code looks wrong, assume they had a reason before assuming they’re careless. Never attribute to malice or incompetence that which is adequately explained by different information, constraints, or incentives.

Someone uses a library you think is overkill for the problem. Maybe they know the requirements are expanding next quarter and the library handles the future cases. Maybe they tried the simple approach and hit limitations. Maybe there’s context you’re missing.

The opposite is assuming the worst possible interpretation.

“They wrote this because they’re lazy.”

“They didn’t follow the pattern because they don’t care about consistency.”

“They hardcoded this because they can’t think abstractly.”

Sometimes people are lazy. Sometimes they don’t care. But starting there kills useful discussion. They get defensive. You get frustrated. And the code? Doesn’t improve.

Start with thinking they must have had a reason. Ask what it was.

“I’m curious why you chose this approach instead of X?” is way more productive than “This should be X.”

The first one opens a conversation. The second one, if not careful, could escalate into a war.

Yes, maybe their reason is bad, or maybe they didn’t think it through. But asking gives them a chance to explain. If the explanation doesn’t make sense, you can discuss it. If they don’t have a good reason, they’ll often realize it themselves when they try to articulate it. The human version of rubber duck debugging works in code reviews too.

Charity doesn’t mean accepting bad code. It means assuming the person is trying to do good work and starting from there. Ask first, judge second. This way you’ll have to buy lunch less frequently.

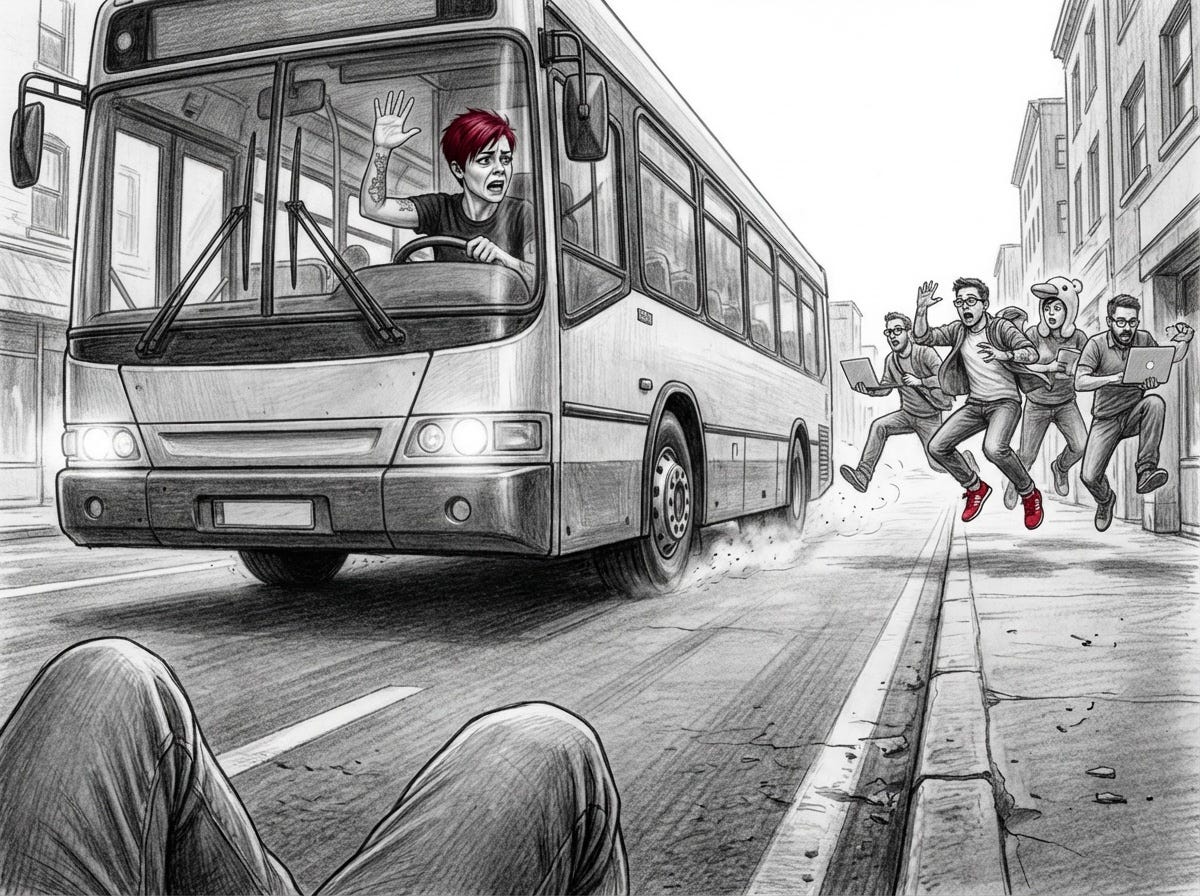

Bike-shedding

Remember that PR from the opening? Three days in review, twelve comments about variable naming, zero about the caching strategy that eventually corrupted production data? That’s bike-shedding.

Here’s another: A PR comes in with a database migration to add a new payment processing table. Changes the schema, adds foreign key constraints, includes a data migration script that will run against production. If this goes wrong, payment data could be corrupted or lost.

But the review? Eight comments about table naming conventions. Four about whether to use snake_case or camelCase for column names. Two about index naming patterns. Zero about whether the migration is reversible. Zero about what happens if it fails halfway through. Zero about whether the foreign key constraints will lock the table during deployment.

Everyone has an opinion on naming conventions. Nobody wants to admit they’re not entirely sure how the migration rollback works.

Bike-shedding is spending disproportionate time on trivial decisions because they’re easy to have opinions about. The term comes from C. Northcote Parkinson’s law of triviality, which he illustrated with a committee that approves a nuclear reactor design in minutes and then spends far longer debating the staff bike shed. Everyone understands bike sheds. Nuclear reactors are complicated.

In code reviews, bike-shedding looks like focusing on style, formatting, naming, and other surface issues while ignoring architecture, security, performance, and correctness. The trivial stuff is easy to comment on. The hard stuff requires thought.

You’re doing it too. You see a complex PR, you’re not entirely sure you understand all the implications, so you comment on the parts you do understand. Formatting. Naming. Simple logic. It feels productive. You’re contributing to the review. (You’re not.) You’re avoiding the hard questions. Commenting on variable names is easier than admitting that you don’t fully understand how this refund flow works.

If you’re the author and you’re getting only trivial comments, ask explicitly for the hard review:

“This migration is risky. Please review the rollback strategy and error handling carefully.”

That tells reviewers where to focus instead of letting them debate naming conventions for an hour.

If you see lots of comments about formatting and zero about correctness, this is a clear sign of bike-shedding. Nobody really reviewed the hard parts. Everyone’s just hoping someone else did.

Good news: now you know how to spot this pattern, you can be the one who breaks it.

Collaboration

Work effectively with humans who aren’t reviewing your code

Code reviews are only one form of collaboration. The rest happens when you’re debugging, coordinating across teams, explaining what you’re building to people who don’t write code.

I know, you’d rather lock yourself in a dark room with the lights off and just code. (It’s a whole aesthetic.) “Just let me work. Just let me write code.” But you can’t. The work requires talking to people who have different context, different constraints, different information than you do.

These models help you do that without wanting to crawl back into the dark room.

Rubber duck debugging

Someone on my team gets stuck. I notice and ask if they need my help. They might smirk, because I don’t know the details of what they’re working on. But I don’t need to. I ask them to walk me through it. They start explaining. I listen without interrupting. Or if opportunity arises, I ask what sounds like a stupid question: “Wait, so when does this error actually happen?” or “What are you expecting to see instead?”

These questions are never as stupid as they sound. They’re usually the question the person forgot to ask themselves, or they break the loop of thought the person got caught in.

Halfway through explaining, they stop. “Hmmm. I think I know what it is....” They’ve hit their aha moment. The mental barrier breaks. I didn’t solve their problem. They solved it by having to explain it clearly enough for someone who doesn’t know the context. No need to finish this conversation. Let them pursue the next clue. If they look focused, quietly leave.

This is rubber duck debugging. The classic version involves explaining problems to a literal rubber duck on your desk.

The human version is better. And more convenient. You will look less like a nerd talking to a person than talking to an inanimate rubber object.

Why does it work? Explaining a problem out loud forces you to organize your thoughts. You think you understand it. Then you try to explain it and realize you’re unclear about a key detail. Or you’re making an assumption that doesn’t hold. Or you’re debugging the wrong part of the system. Speaking it out loud makes the confusion visible.

It works even when the listener doesn’t know the domain.

Being the listener means not solving the problem. It means asking clarifying questions and giving the person space to think out loud. Simple questions often trigger them to see what they missed. Sometimes the best help is just being there while someone works through it themselves.

When you’re stuck, find someone. A coworker. A manager. Someone who has no idea what you’re talking about. Explain the problem like they know nothing about it. Odds are you’ll solve it before you finish explaining.

Information asymmetry

PM files a request: “Users on the higher plan should be able to turn their own favicons on or off. Just add a toggle.”

Backend starts implementing. They dig into the code. Turns out this favicon functionality depends on three other toggles with mutually exclusive logic rules. It’s not just a toggle. They’d have to add completely new functionality and untangle a web of interconnected conditions.

Backend estimates it will take a couple of days to properly rewrite the rules.

PM response: “Why is this taking so long? It should be an hour.”

PM’s manager agrees. It’s one toggle. What’s the problem?

Three meetings and detailed code walkthroughs later, everyone finally understands: if you want this “simple toggle,” you have to unravel years of tangled conditional logic.

This is information asymmetry. You each have context the other doesn’t. PM knows what users need. Backend knows the technical reality. Neither of you knows what the other knows.

This happens everywhere in engineering. Product knows user needs but not technical constraints. Backend knows database limitations but not what the frontend is trying to build. Infrastructure knows deployment complexity but not why the feature is urgent. Everyone operates with incomplete information and assumes the other side sees what they see.

There are meetings and there are meetings. More meetings won’t save you from information asymmetry, but better ones might. Or better yet: provide context in task descriptions and specs. Include it in kickoff meetings when explaining what you’re building and why, so engineers understand the problem space and constraints before they start with the implementation.

“We need this toggle because higher-tier users are asking for more control over their branding. It’s part of the enterprise package we’re launching next quarter.” Now backend knows why it matters. They might suggest: “We can ship a simplified version now and refactor the toggle system properly over the next sprint.” You get a solution. They avoid rushing a mess into production. Everyone wins because the context was shared.

Or you’re debugging a cross-team issue. Frontend says the API is returning errors. Backend says they’re seeing successful requests in the logs. You’re both looking at different data. Frontend is looking at client-side errors from network failures. Backend is looking at server logs that only capture requests that reached the server. The errors are happening before the request gets there. Neither team had visibility into the full picture.

Information asymmetry makes collaboration slow and frustrating. You repeat questions because the answer assumed context you don’t have. You make decisions that seem obvious to you but break things for another team. You wait for responses to questions that could have been answered in the original message if the context had been included.

Sharing context proactively closes the gap. Explaining why when asking for something. Documenting what you considered when making decisions. Sharing what you’re seeing when something goes wrong, not just your conclusion.

The other team isn’t being difficult. They’re working with different information.

Brooks’s law

Project is late. You’re two months behind schedule and the deadline isn’t moving. Management’s solution: add three more engineers to the team. Get this thing shipped. Surely more people means more throughput, right?

(It does not. And nine women still can’t give birth to a child in one month.)

The new engineers join. You spend the first week getting them set up. Development environments. Access to systems. Explaining the architecture. Answering basic questions about how things work.

Second week: they’re trying to contribute but their PRs need heavy review. They don’t understand the domain deeply enough. You’re spending more time reviewing their code than you would have spent just writing it yourself.

Third week: they introduce a bug because they didn’t know about a critical edge case. You spend two days debugging and fixing it. You’re now further behind than when they joined.

Brooks’s law from The Mythical Man-Month (1975) states that adding people to a late project makes it... later. Math is hard when it involves humans. The ramp-up time and communication overhead outweigh the productivity gains. This is counterintuitive. More people should mean more work getting done. In software, it often means less.

Every person you add increases the number of communication paths, and that number grows quadratically, not linearly: a team of n people has n(n-1)/2 possible pairs. Three people mean 3 communication paths. Four people are 6 paths. Five people are 10 paths. Ten people: 45 paths. The coordination overhead grows faster than the productivity.
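The path counts above follow the pairwise formula n(n-1)/2, where n is the team size. A small sketch (the function name is just an illustration) reproduces them:

```javascript
// Number of distinct pairs of people in a team of the given size: n * (n - 1) / 2.
function communicationPaths(teamSize) {
  if (!Number.isInteger(teamSize) || teamSize < 0) {
    throw new RangeError("teamSize must be a non-negative integer");
  }
  return (teamSize * (teamSize - 1)) / 2;
}

for (const n of [3, 4, 5, 10]) {
  console.log(`${n} people: ${communicationPaths(n)} paths`);
}
// 3 people: 3 paths
// 4 people: 6 paths
// 5 people: 10 paths
// 10 people: 45 paths
```

Going from five people to eight adds three engineers but takes the path count from 10 to 28.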

New team members need training. They need context. They need to understand the codebase, the domain, the team practices, the deployment process, the debugging techniques. Someone has to provide that. Usually the people who are already behind.

This doesn’t mean never add people. It means adding people has a cost and a delay before you see benefits. If you’re three months behind and add people, expect them to slow you down for the first month, break even in the second month, and start helping in the third month. If your deadline is in two months, adding people makes you miss it.

What actually helps late projects is (surprise, surprise!) cutting scope, extending deadlines, or fixing blockers that slow down the existing team. Adding people helps future projects. It doesn’t help the fire you’re currently fighting.

If you’re already behind and they’re adding team members anyway, set expectations. The new people will slow you down initially. You’ll miss the deadline you’re already going to miss, just by more. Then, three months from now when you’re working on the next thing, you’ll have a bigger team that’s actually productive. Tell management this now so they can act surprised later when it happens exactly as predicted.

Adding people is an investment in future capacity, not a fix for current delays.

Bus factor

Your senior engineer goes on vacation for two weeks. On day three, the authentication service breaks. Nobody else on the team knows how it works. The person on vacation wrote it. They’re the only one who’s touched it. They’re unreachable because they’re actually on vacation, which you’re now ruining with urgent Slack messages and desperate SMS messages.

In this case your bus factor is one. If that engineer gets hit by a bus (or goes on vacation, or quits, or gets sick), you’re in trouble. The bus doesn’t even need to be literal. A two-week vacation will do the same, but luckily it is less terminal.

Your bus factor is the number of people who need to disappear before your project fails. It’s morbid but accurate. If the answer is one, you have a single point of failure in your team. Time to add some redundancy.

This happens with critical systems that one person maintains, but it also happens with knowledge: the person who knows why the database is configured this way, the person who understands the contract with the external API, the person who can debug the performance issues because they profiled the system two years ago. When they leave, that knowledge leaves with them.

Having experts own their domains feels efficient. It’s also fragile. Everything works perfectly until the expert goes on vacation... or decides to go on a different kind of vacation... like at another company. If that’s when you figure out you have a problem, well, you definitely have a big problem. Most likely the world won’t stop spinning, but it will slow down the company significantly.

Redundancy or at least partial overlaps of knowledge/ownership of critical systems are a must. Non-negotiable.

If you can’t get leadership support, do a bus factor dry-run: check out for a couple days (but keep an eye on Slack) and let them figure out how to solve things. Make it their problem, because ultimately it is their problem.

A high bus factor isn’t about everyone knowing everything. It’s about critical things being understood by at least two people. The authentication system should have a primary owner and a secondary who’s contributed to it, reviewed the code, and could maintain it if needed. The deployment process should be documented well enough that two people can run it.

Pair programming helps. Code reviews help. Documentation helps. Cross-training helps. Rotating responsibilities helps. Anything that spreads knowledge from one person to at least one other person.

You know your bus factor is too low when you think “I can’t take vacation because nobody else can handle X,” or when someone leaves and you panic about what they were working on.

When the bus factor for something critical is one, it needs fixing before the bus shows up. Have someone else pair on the next change. Document how it works. Do a knowledge transfer. Get the count to two.
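If you want to make the count visible, a rough sketch like the one below can help. The ownership map, the names, and the threshold of two are all hypothetical. In practice you would fill the map in by hand or from whatever your team already tracks.

```javascript
// Hypothetical map of critical systems to the people who could maintain them today.
const maintainers = {
  "authentication service": ["dana"],
  "deployment pipeline": ["dana", "lee"],
  "billing": ["lee", "sam", "kim"],
};

// Returns the systems whose bus factor (number of distinct maintainers)
// is below the minimum, which defaults to two.
function findSinglePointsOfFailure(ownership, minimum = 2) {
  return Object.entries(ownership)
    .map(([system, people]) => ({ system, busFactor: new Set(people).size }))
    .filter(({ busFactor }) => busFactor < minimum);
}

const atRisk = findSinglePointsOfFailure(maintainers);
for (const { system, busFactor } of atRisk) {
  console.log(`${system}: bus factor ${busFactor}`);
}
// authentication service: bus factor 1
```

The list is the easy part. The pairing session that gets a second name onto it is the actual fix.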

One is not a team. It’s a dependency. A critical one.

Premature consensus

Team meeting to decide on the new architecture. The tech lead suggests microservices. Everyone nods. Sounds good. Makes sense. Let’s do it.

Meeting done in under an hour. Everyone’s aligned. You give yourself a pat on the back. Very efficient. We must be geniuses. Efficient geniuses.

Six months later, you’re drowning in service coordination complexity, distributed debugging, and deployment orchestration. Someone asks: “Why did we go with microservices again?” Nobody has a good answer. Everyone agreed at the time. Efficiently agreed, even. (Very efficient disaster.)

So what happened? Premature consensus. Agreeing without actually discussing. It looks like alignment but it’s really conflict avoidance. Nobody wants to be the person who slows down the meeting by asking hard questions. Nobody wants to disagree with the tech lead. So everyone says yes and you move forward with a decision that wasn’t really examined.

You see this in architecture decisions, tooling choices, process changes. Someone proposes something. It sounds reasonable. Nobody has strong objections they’re willing to voice. Agreement. Done. You like to move fast after all.

So, where’s the problem? The problem is that agreement without discussion creates fragile decisions. You didn’t explore alternatives. You didn’t stress-test the assumptions. You didn’t think through the second-order effects. You just said yes because it was easier than saying “wait, let’s think about this.”

Six months later when the decision turns out poorly, nobody remembers why you made it. “It seemed like a good idea at the time” is the post-mortem conclusion.

Real consensus requires disagreement first. Someone needs to ask: “What are we giving up with this approach? What are the alternatives? What could go wrong? Why is this better than the simpler option?”

If nobody asks those questions, you’re not getting real buy-in. You’re getting polite compliance.

The tech lead suggests microservices. Someone asks: “What problem are we solving that requires microservices? Would we be better off with a modular monolith first?” Now you’re discussing tradeoffs. Someone else: “How will we handle distributed debugging?” Now you’re thinking through operational complexity. Someone: “Do we have the team size to maintain multiple services?” Now you’re considering capacity.

Maybe you still choose microservices. But now you’ve examined alternatives, considered the costs, and thought through the problems. The decision is stronger because it survived scrutiny.

Fast agreement in meetings is a signal. It often means people aren’t thinking it through. When major technical decisions get consensus in under 30 minutes, scrutiny is probably missing.

Disagreement isn’t bad. It’s how you find the holes in the plan before you build it. Refresh your memory about mental models like Devil’s advocate that can help you with intentional dissent and detect groupthink.

Self-assessment and blind spots

Know what you don’t know

You’re most dangerous when you don’t know what you don’t know. Camping on Mt. Stupid hurts you and everyone around you.

Dunning-Kruger effect

I’ve touched upon the Dunning-Kruger effect in the essay for leaders, but it’s worth revisiting for ICs.

Sometimes it will seem like you’re ascending and descending its slopes daily, especially when working on demanding and complex problems.

In case you haven’t heard about it: the Dunning-Kruger effect describes how you’re most confident when you know the least. Beginners overestimate their competence because they don’t know enough to recognize what they’re missing. Experts underestimate their competence because they know how much they don’t know.

You learn the basics of a technology and think you’ve mastered it. But you’ve learned enough to be dangerous. You can build something that works. You haven’t learned how to build something that works at scale, or handles edge cases, or performs well, or is maintainable.

Junior engineer learns SQL and thinks databases are simple. Writes queries that work in development with 100 rows. In production with 10 million rows, everything times out. They didn’t know about indexes, query planning, or database optimization.

Or you read about microservices and decide your monolith should be decomposed. You haven’t dealt with distributed debugging, service coordination, eventual consistency, or deployment orchestration. You know the benefits. You don’t know the costs because you haven’t experienced them. YOLO. (No, please don’t YOLO on this one.)

Confidence peaks early when you know just enough to build something. Then it drops as you discover how much you don’t know. Eventually it rises again as you develop expertise.

The dangerous zone is the peak, also called Mount Stupid. You know enough to make decisions but not enough to make good ones. You’re confident but incompetent. This is when you propose rewriting the entire backend in the new framework you learned last week.

If you’re feeling very confident about something you learned recently, you’re probably on the peak. “I just learned X and it seems straightforward.” If someone with more experience snorts at this statement, you’re definitely there. The moment you think you’ve mastered something is probably the moment you’ve barely started understanding it. The descent through the valley of despair is inevitable. The climb back up the slope of enlightenment just comes with more humility.

When the Ikea effect and NIH syndrome have a baby

Three weeks building a custom state management solution. It handles your exact use case perfectly. Someone suggests using Redux. Your reaction: “Redux is overkill. My solution is simpler and works better for what we need.”

Is it actually better? Or do you just think it’s better because you built it? Hard to know when you’re the one who built it.

You might be under the influence of the Ikea effect. You overvalue things you created yourself. The effort you put in makes you attached to the outcome, even when objectively better alternatives exist.

You built the furniture yourself. It’s wobbly and the drawers don’t close right, but you love it because you made it. Someone suggests buying a better one. No way. This one is special. (You know which drawer needs the exact angle to open. That’s craftsmanship.)

Same with code. You wrote a library that solves a problem. It works. Someone points out there’s a well-maintained open source library that does the same thing, handles more edge cases, and has better documentation. You defend your library: “It’s lighter weight. We control it. It’s tailored to our needs.”

Maybe those reasons are valid. Maybe you’re just attached to your creation.

This is why engineers resist throwing away their code. You spent days or weeks building it. Deleting it feels like wasting that effort. So you keep it even when it’s not the best solution.

Or you’re refactoring and someone suggests removing a complex abstraction you built. Your first instinct is to defend it: “It’s needed for future extensibility.” Is it though? Or did you spend time building it and now you can’t let it go?

The Ikea effect makes you blind to better alternatives. You’ve invested effort, so you rationalize why your solution is correct instead of objectively evaluating it. Loss aversion kicks in.

Would you choose this solution if you hadn’t built it yourself? If someone else wrote this library or this abstraction, would you use it? If the honest answer is no, the Ikea effect is at work.

The length of your defense is a signal. When you find yourself explaining at length why your custom solution is better than the standard tool, you’re rationalizing, not evaluating. Let someone else look at it. They don’t have your attachment.

The code is a tool, not a baby. The effort spent building it taught you something. That was the value. The code itself is replaceable.

Team needs a solution for handling background jobs. There are mature, well-tested libraries: Sidekiq, Bull, Celery. Your team decides to build their own.

“We have specific requirements.” (Translation: “We want to build it ourselves.”)

“The existing tools are too heavy.” (Translation: “We haven’t actually evaluated them.”)

“It’ll be simpler to build exactly what we need.” (It will not be simpler.)

Six months later, you’re debugging race conditions, handling failed jobs, implementing retry logic, building monitoring, and dealing with edge cases the existing libraries already solved. You’ve most likely rebuilt a worse version of something that already existed.

Not invented here (NIH) syndrome is rejecting external solutions because you didn’t build them. In other words: if we didn’t make it, it can’t be right for us.

This is different from the Ikea effect (overvaluing what you built). This is refusing to use what others built.

You see a library that solves your problem. Your first instinct: How hard can it be to build this ourselves? Often the answer is harder than you think. The library handles cases you haven’t encountered yet. It’s been battle-tested in production. It has community support and documentation. Your version will have none of that.

But you build it anyway. Building your own is more satisfying than using someone else’s solution.

Or another team at your company built a tool that could work for you. You reject it: Their use case is different. It’s not exactly what we need. We’d have to change our workflow. So you build your own. Now you have two tools doing the same thing, both with partial solutions, neither as good as if you’d combined efforts.

The cost isn’t just the time building it. It’s the ongoing maintenance, the bugs you’ll hit that the mature tool already fixed, the features you’ll eventually need that the existing tool already has.

Sometimes building is the right choice. If the existing tools truly don’t fit your requirements. If the dependency adds more complexity than building. If you need something so specific that customization would be harder than starting fresh. Sometimes.

But most of the time, the appeal of building outweighs the practical case for it. Which is fine for side projects. Less fine when you’re six months in, debugging race conditions the mature library already solved.

If the existing solution came from inside your company instead of outside, would you use it? If yes, the problem probably isn’t the solution. It’s where the solution came from.

Boring technology and proven libraries maintained by other people: that’s how you free up effort for problems unique to what you’re building. Let’s keep the Ikea effect from having babies with NIH syndrome. Nothing good will come of that.

Peak-end rule

Your memory doesn’t average experiences. It anchors on peaks and endings.

Six months on a project. Most of it was fine. Normal progress, normal challenges, nothing dramatic. Then the last two weeks were a disaster. The deployment failed three times. You worked late nights debugging and the launch was rocky.

When someone asks how the project went, you say: It was rough. You remember the disaster, not the six months of steady work. Those six months might as well not have happened. Your brain decided the last two weeks are the whole story. (If they’d asked in month four, you’d have said it was fine. But they didn’t ask in month four.)

This affects how you evaluate everything. A colleague is helpful for months, then snippy in one code review. You remember them as difficult. A project goes smoothly for weeks, then hits one major issue. You remember it as problematic.

The reverse too. A project is a grind but the launch goes well. You remember it as a success. A teammate is frustrating to work with but comes through in a crisis. You remember them as reliable.

You’re doing this to yourself too. You worked hard all quarter, shipped three features, then made one mistake at the end. When the performance review comes, you focus on the mistake. Your manager might remember the steady delivery. You remember the peak moment of stress.

Or you’re debugging an issue for hours. Frustrating, stuck, making no progress. Then you solve it in the last fifteen minutes. The experience feels satisfying because it ended well. If you’d given up at hour three, you’d remember it as wasted time. Same three hours, different ending, completely different memory.

Last impressions matter in code reviews, project retrospectives, and team interactions. The last thing that happens shapes how the entire experience is remembered.

The ending matters disproportionately. Meetings that end with clear next steps feel productive. Code reviews that end with acknowledgment of what worked well feel collaborative. Retrospectives that work through the problems and then close on what went right leave people energized instead of demoralized. Same content, different order, completely different memory.

When evaluating something, the average should matter more than the peaks and the ending. Was the project actually bad, or did it just end badly? Is the person actually difficult, or did they just have one rough interaction?
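To put numbers on the gap between the full story and the remembered one, here is a minimal sketch. It assumes a hypothetical 1-10 weekly rating scale with 5 as neutral, and it uses the common simplification that the remembered score is the average of the most intense moment and the final moment. The ratings are made up for illustration and the function names are mine, not from any library.

```python
from statistics import mean

NEUTRAL = 5  # assumed midpoint of a hypothetical 1-10 weekly rating scale


def peak_end_score(ratings):
    """Simplified peak-end model: average of the most intense week and the last week."""
    # "Peak" here means the rating furthest from neutral, good or bad.
    peak = max(ratings, key=lambda r: abs(r - NEUTRAL))
    return (peak + ratings[-1]) / 2


def full_story_score(ratings):
    """Plain average over every week of the project."""
    return mean(ratings)


# Illustrative data: 24 ordinary weeks rated 7, then two disastrous weeks rated 2.
project = [7] * 24 + [2, 2]

remembered = peak_end_score(project)
actual = full_story_score(project)

print(f"remembered: {remembered:.1f}, full story: {actual:.1f}")
```

With these made-up numbers the remembered score is 2.0 while the average is about 6.6. The project that "was rough" was, week by week, mostly fine.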

Your memory is a highlight reel, not a documentary. Make sure you’re judging the full story, not just the clips that stand out. No need to treat your work like Instagram.

I’m repeating myself

You probably don’t need all of these at once. Pick one model. Notice when it applies. Course-correct before the pattern becomes expensive. Yeah, yeah, I’m repeating myself.

You can’t eliminate these patterns. They’re human. The goal is catching them earlier.

Engineering isn’t just solving technical problems. It’s collaborating on solutions, reviewing each other’s work, and being honest about what you know and don’t know.

Now go build something great together.
